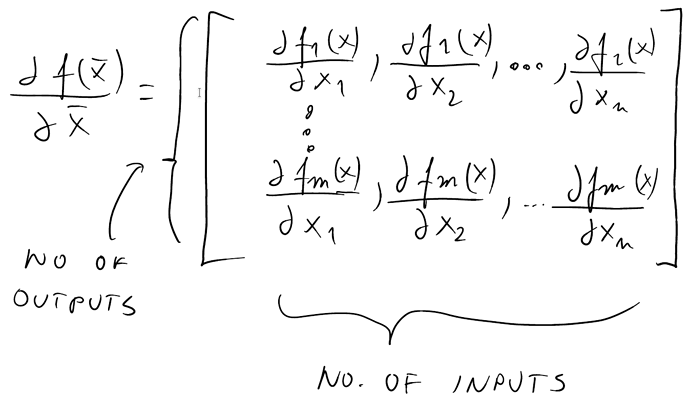

I guess part of the difference can also be explained by the different renderers. The Onyx output seems to use thicker strokes (about 2 pixels vs. 1), and although Onyx applies anti-aliasing, the result somehow has stronger contrast (it looks more “pixelized”).

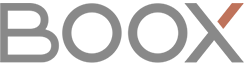

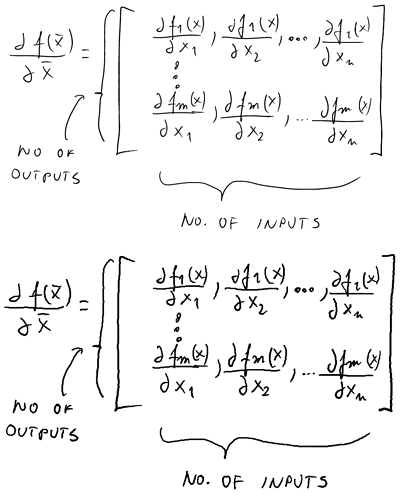

That said, despite its simplicity, that filtering method is surprisingly effective at evening out the curves and the occasional one-pixel jitter. The differences between the “p” letters in “outputs”, or the top “d” of “dfm(x)/dx2” (I’m not bothering to look up and paste the correct symbol for the not-really-d), are quite telling.
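To illustrate why even a simple filter evens out such jitter, here is a minimal sketch that assumes the filter is a plain centered moving average over the stroke’s (x, y) points. The function name `moving_average` and the window size of 5 are illustrative choices, not taken from the Onyx code. It only computes positions that have a full window, so end handling is deliberately left out.

```python
def moving_average(points, window=5):
    """Centered moving average of (x, y) points.

    Only positions with a full window are returned, so the first and last
    window // 2 points are dropped (end handling is left out on purpose).
    """
    half = window // 2
    out = []
    for i in range(half, len(points) - half):
        chunk = points[i - half:i + half + 1]
        out.append((sum(p[0] for p in chunk) / window,
                    sum(p[1] for p in chunk) / window))
    return out


# A horizontal stroke at y = 10 with a single one-pixel jitter at x = 5.
stroke = [(x, 10) for x in range(11)]
stroke[5] = (5, 11)

smoothed = moving_average(stroke, window=5)
print(max(y for _, y in smoothed))  # prints 10.2
```

The one-pixel spike becomes a 0.2-pixel bump spread over five points, which is the kind of evening-out visible in the samples.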

However, it seems to shorten the ends? For example, the d’s in “dx2” and “dxn” on the bottom row have their end (or beginning, depending on how one writes them) shortened by a couple of pixels. Without reverse-engineering the code (I’m not familiar with that language), I’d hazard a guess at the reason: the averaging somehow handles the end points incorrectly.

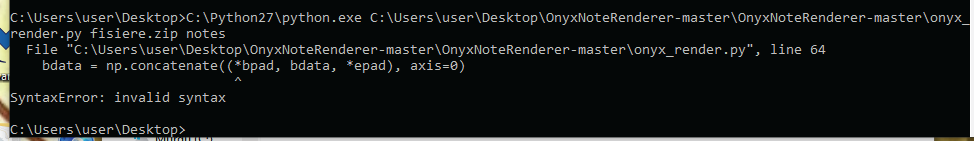

From what little I can decipher of the code, it tries to handle them properly by padding extra entries with weighted values, but it seems to pad only with the end-point value itself (weighted). That would shift the ends slightly toward the next points, i.e. shorten the stroke… but would it shorten it that much?
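Here is a small sketch of that suspicion. It assumes the filter is a centered moving average applied to each coordinate separately, and that the padding is `window // 2` copies of the end value; `smooth_edge_padded` is a hypothetical name, not the actual code. On a straight, evenly sampled stroke the middle is left untouched, but both ends move inward.

```python
def smooth_edge_padded(values, window=5):
    """Centered moving average that pads each end by repeating the end value.

    Hypothetical reconstruction: assumes a plain moving average and
    `window // 2` copies of the first/last value as padding.
    """
    half = window // 2
    padded = [values[0]] * half + list(values) + [values[-1]] * half
    # Output i averages padded[i : i + window], centered on values[i].
    return [sum(padded[i:i + window]) / window for i in range(len(values))]


# x coordinates of a straight, evenly sampled stroke from 0 to 10
xs = list(range(11))
out = smooth_edge_padded(xs, window=5)
print(out[0], out[-1])  # prints 0.6 9.4
```

Under these assumptions, the first output point moves inward by half·(half + 1)/2 / window point spacings (with half = window // 2), roughly window/8: 0.6 spacings for a window of 5, about 1.1 for a window of 9. Whether that amounts to a couple of pixels depends on how far apart the sampled points are, which, at a fixed sampling rate, grows with pen speed.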

Hmm… perhaps padding with a mirror image of the second point (or the second-to-last one) could give a better result? With my off-the-cuff pseudo-code, something like:

```
bpad = (first - (second-first)*before/2) * before
```

I’m leaving it like that, uncleaned, to show the idea: mirror the second point about the first point, scale its offset by a quarter of the window size, and give it a weight of half the window size. It is as if the stroke had started half a window’s worth of points earlier, running straight through the first point to the second. (The same goes for the end point, of course.) Edit: that should keep the ends at roughly the correct length while still allowing a little smoothing.
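A minimal sketch of the idea as described, again assuming a centered moving average applied per coordinate. Instead of one weighted point, it adds `half = window // 2` virtual points that continue the straight line through the first two points (and, at the other end, through the last two). `smooth_extrapolated` is a hypothetical name, not the author’s or Onyx’s code. On a straight, evenly sampled stroke the ends stay put.

```python
def smooth_extrapolated(values, window=5):
    """Centered moving average whose padding continues the stroke in a
    straight line beyond each end. Needs at least two points.

    Hypothetical sketch of the mirroring idea, not the original code.
    """
    half = window // 2
    first, second = values[0], values[1]
    last, second_last = values[-1], values[-2]
    # Virtual points first - k*(second - first) for k = half, ..., 1
    before = [first - k * (second - first) for k in range(half, 0, -1)]
    # Virtual points last + k*(last - second_last) for k = 1, ..., half
    after = [last + k * (last - second_last) for k in range(1, half + 1)]
    padded = before + list(values) + after
    return [sum(padded[i:i + window]) / window for i in range(len(values))]


xs = list(range(11))
out = smooth_extrapolated(xs, window=5)
print(out[0], out[-1])  # prints 0.0 10.0
```

The `before` virtual points sum to half·(first − (second − first)·(half + 1)/2), which is the single weighted point of the pseudo-code with (before + 1)/2 in place of before/2. With before/2, the start of a straight stroke still moves inward slightly, by half/(2·window) spacings (0.2 for a window of 5, versus 0.6 when the end value is simply repeated), which fits “roughly correct”.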